In a long list of plots that Martin wants me to add to the paper draft I sent out last week, the following:

Is it possible to show the histograms projected along each axis in addition to the 2D density? I know people do this in IDL frequently, I'm not sure how to do this in matplotlib. If it's possible we could play a similar game with the L-z figure. The 1D histograms contain lots of useful information, including how significant our clustering detection is in each bin.

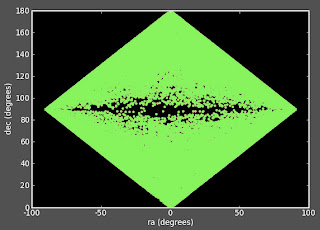

Ask and you shall receive Martin. Below is the "money plot" (the temperature 2D histogram which shows amplitudes the bright versus dim correlation function) with histograms on the side of either axis.

There are some cool features that I'll describe in the comments below. I think this plot rocks.

import numpy as np

import matplotlib.pyplot as plt

from matplotlib.ticker import NullFormatter

def makeTempHistogramPlot(xdata,ydata,rexp,filename=None,xlims=-99, ylims =-99 , \

nxbins = 50,nybins=50, bw=0, nbins=100,contours=1,sigma=1,line=1):

#bw = 0 for color, = 1 for black and white

#line = 0 for no line, =1 for line

#sigma = 1 for display % below line, =0 for not

#contours = 1 for display 1,2,3 sigma contours, = 0 for not.

# Define the x and y data

x = xdata

y = ydata

# Set up default x and y limits

if (xlims == -99): xlims = [0,max(x)]

if (ylims == -99): ylims = [0,max(y)]

# Set up your x and y labels

xlabel = '$\mathrm{Your\\ X\\ Label}$'

ylabel = '$\mathrm{Your\\ X\\ Label}$'

mtitle = ''

# Define the locations for the axes

left, width = 0.12, 0.55

bottom, height = 0.12, 0.55

bottom_h = left_h = left+width+0.02

# Set up the geometry of the three plots

rect_temperature = [left, bottom, width, height] # dimensions of temp plot

rect_histx = [left, bottom_h, width, 0.25] # dimensions of x-histogram

rect_histy = [left_h, bottom, 0.25, height] # dimensions of y-histogram

# Set up the size of the figure

fig = plt.figure(1, figsize=(9.5,9))

# Make the three plots

axTemperature = plt.axes(rect_temperature) # temperature plot

axHistx = plt.axes(rect_histx) # x histogram

axHisty = plt.axes(rect_histy) # y histogram

# Remove the inner axes numbers of the histograms

nullfmt = NullFormatter()

axHistx.xaxis.set_major_formatter(nullfmt)

axHisty.yaxis.set_major_formatter(nullfmt)

# Find the min/max of the data

xmin = min(xlims)

xmax = max(xlims)

ymin = min(ylims)

ymax = max(y)

# Make the 'main' temperature plot

xbins = linspace(start = 0, stop = xmax, num = nxbins)

ybins = linspace(start = 0, stop = ymax, num = nybins)

xcenter = (xbins[0:-1]+xbins[1:])/2.0

ycenter = (ybins[0:-1]+ybins[1:])/2.0

aspectratio = 1.0*(xmax - 0)/(1.0*ymax - 0)

H, xedges,yedges = N.histogram2d(y,x,bins=(ybins,xbins))

X = xcenter

Y = ycenter

Z = H

# Plot the temperature data

if(bw): cax = axTemperature.imshow(H, extent=[xmin,xmax,ymin,ymax], \

interpolation='nearest', origin='lower',aspect=aspectratio, cmap=cm.gist_yarg)

else : cax = axTemperature.imshow(H, extent=[xmin,xmax,ymin,ymax], \

interpolation='nearest', origin='lower',aspect=aspectratio)

# Plot the temperature plot contours

if(bw): contourcolor = 'black'

else: contourcolor = 'white'

if (contours==0):

print ''

elif (contours==1):

xcenter = N.mean(x)

ycenter = N.mean(y)

ra = N.std(x)

rb = N.std(y)

ang = 0

X,Y=ellipse(ra,rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",ms=1,linewidth=2.0)

axTemperature.annotate('$1\\sigma$', xy=(X[15], Y[15]), xycoords='data',xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25)

X,Y=ellipse(2*ra,2*rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",color = contourcolor,ms=1,linewidth=2.0)

axTemperature.annotate('$2\\sigma$', xy=(X[15], Y[15]), xycoords='data',xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25, color = contourcolor)

X,Y=ellipse(3*ra,3*rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",color = contourcolor, ms=1,linewidth=2.0)

axTemperature.annotate('$3\\sigma$', xy=(X[15], Y[15]), xycoords='data',xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25, color = contourcolor)

else:

xcenter = N.mean(x)

ycenter = N.mean(y)

ra = N.std(x)

rb = N.std(y)

ang = contours*N.pi/180.0

X,Y=ellipse(ra,rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",ms=1,linewidth=2.0)

axTemperature.annotate('$1\\sigma$', xy=(X[15], Y[15]), xycoords='data', xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25)

X,Y=ellipse(2*ra,2*rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",ms=1,linewidth=2.0, color = contourcolor)

axTemperature.annotate('$2\\sigma$', xy=(X[15], Y[15]), xycoords='data', xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25, color = contourcolor)

X,Y=ellipse(3*ra,3*rb,ang,xcenter,ycenter)

axTemperature.plot(X,Y,"k:",ms=1,linewidth=2.0, color = contourcolor)

axTemperature.annotate('$3\\sigma$', xy=(X[15], Y[15]), xycoords='data', xytext=(10, 10), textcoords='offset points',horizontalalignment='right', verticalalignment='bottom',fontsize=25, color = contourcolor)

#Plot the % below line

belowline = 1.0*size(where((x - y) > 0.0))/size(x)*1.0*100

if(sigma): axTemperature.annotate('$%.2f\%%\mathrm{\\ Below\\ Line}$'%(belowline), xy=(xmax-100, ymin+3),fontsize=20, color = contourcolor)

#Plot the axes labels

axTemperature.set_xlabel(xlabel,fontsize=25)

axTemperature.set_ylabel(ylabel,fontsize=25)

#Make the tickmarks pretty

ticklabels = axTemperature.get_xticklabels()

for label in ticklabels:

label.set_fontsize(18)

label.set_family('serif')

ticklabels = axTemperature.get_yticklabels()

for label in ticklabels:

label.set_fontsize(18)

label.set_family('serif')

#Plot the line on the temperature plot

if(line): axTemperature.plot([-1000,1000], [-1000,1000], 'k-', linewidth=2.0, color = contourcolor)

#Set up the plot limits

axTemperature.set_xlim(xlims)

axTemperature.set_ylim(ylims)

#Set up the histogram bins

xbins = N.arange(xmin, xmax, (xmax-xmin)/nbins)

ybins = N.arange(ymin, ymax, (ymax-ymin)/nbins)

#Plot the histograms

if (bw):

axHistx.hist(x, bins=xbins, color = 'silver')

axHisty.hist(y, bins=ybins, orientation='horizontal', color = 'dimgray')

else:

axHistx.hist(x, bins=xbins, color = 'blue')

axHisty.hist(y, bins=ybins, orientation='horizontal', color = 'red')

#Set up the histogram limits

axHistx.set_xlim( 0, max(x) )

axHisty.set_ylim( 0, max(y))

#Make the tickmarks pretty

ticklabels = axHistx.get_yticklabels()

for label in ticklabels:

label.set_fontsize(12)

label.set_family('serif')

#Make the tickmarks pretty

ticklabels = axHisty.get_xticklabels()

for label in ticklabels:

label.set_fontsize(12)

label.set_family('serif')

#Cool trick that changes the number of tickmarks for the histogram axes

axHisty.xaxis.set_major_locator(MaxNLocator(4))

axHistx.yaxis.set_major_locator(MaxNLocator(4))

if(filename):

savefig(filename + '.eps',format = 'eps', transparent=True)

savefig(filename + '.pdf',format = 'pdf', transparent=True)

savefig(filename + '.png',format = 'png', transparent=True)

return 0