Adam rocks. He helped me figure out a way to parallelize the calculation of my correlation functions so that they all run at once. Hopefully this will speed up my code by a factor of 10-20. Here is how it works:

A while ago I posted about how to use qsub to run scripts. I've now changed my Python code so that the program which calculates the correlation functions calls qsub itself.

I have the following script:

runcrossCorr.script

~~~~~~~~~~~~~~

#PBS -l nodes=1

#PBS -N JessCC$job

#PBS -j oe

#PBS -m ea

#PBS -M jakirkpatrick@lbl.gov

#PBS -o qsub.out

cd /home/jessica/repository/ccpzcalib/Jessica/pythonJess

echo "$corr2dfile $argumentfile > $outfile"

$corr2dfile $argumentfile > $outfile

~~~~~~~~~~~~~~

This script is called using the following command:

qsub -v job=0,corr2dfile=./2dcorrFinal,argumentfile=./run2010129_237/wpsInputs0,outfile=./run2010129_237/wps0.dat runcrossCorr.script

where -v passes a comma-separated list of environment variables (no spaces between entries) to the job, so that within the script (in this example)

$job is replaced with 0

$corr2dfile is replaced with ./2dcorrFinal

$argumentfile is replaced with ./run2010129_237/wpsInputs0

$outfile is replaced with ./run2010129_237/wps0.dat

Many of these qsub commands can be issued from Python; they are all sent to the queue and run in parallel on different cluster computers.

I've done this so that python automatically changes the argumentfile and outfile and runs all the correlation functions at once.

These correspond to updated versions of my python functions runcrossCorr and runautoCorr in the correlationData library.
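
Here is a minimal sketch of what such a submission loop might look like. It is not the actual code in runcrossCorr or runautoCorr. It assumes Python 3.7+, that qsub is on the PATH, and that the input and output files follow the wpsInputsN / wpsN.dat naming from the example above. The function names build_qsub_command and submit_all are made up for illustration.

```python
import subprocess


def build_qsub_command(job, run_dir, corr2dfile="./2dcorrFinal",
                       script="runcrossCorr.script"):
    """Return the argument list for one qsub submission (hypothetical helper)."""
    # Variables handed to the PBS script through qsub -v.
    variables = {
        "job": job,
        "corr2dfile": corr2dfile,
        "argumentfile": f"{run_dir}/wpsInputs{job}",
        "outfile": f"{run_dir}/wps{job}.dat",
    }
    # qsub -v takes one comma-separated list with no spaces between entries.
    variable_list = ",".join(f"{name}={value}" for name, value in variables.items())
    return ["qsub", "-v", variable_list, script]


def submit_all(n_jobs, run_dir):
    """Submit one job per input file; each call returns once the job is queued."""
    for job in range(n_jobs):
        # check=True raises CalledProcessError if qsub rejects the submission.
        subprocess.run(build_qsub_command(job, run_dir), check=True)
```

Calling submit_all(20, "./run2010129_237") would queue 20 jobs, numbered 0 to 19. Building the command as a list rather than a single string avoids any shell quoting problems with the -v argument.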

Look at all my jobs running (type qstat into terminal)!

And it even emails me when it's done!

So, now all I do is make a Python script that runs all the code and set it going in the background, with standard output and standard error redirected to files:

python 100128run.py > 100128.out 2> 100128.err &

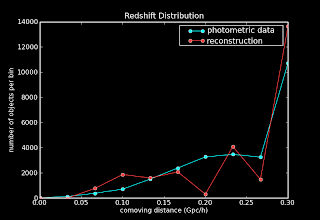

I'm running with 1,000,000 points and finer redshift binning. Hopefully this will work even better!